I have seen many posts about MIDI quantization, but I haven’t found any information on how to quantize audio in Ardour. In DAWs like Pro Tools, Sonar and others, I can use Elastic Audio or AudioSnap to quantize audio tracks. How could I get something like this working in Ardour? Is there any feature for audio quantization? By the way, if it’s not possible yet, I would like to help implement such a feature in Ardour.

Ardour doesn’t allow this. We have the feature planned out. This would be a very substantial task to take on for someone new to Ardour development.

BTW, I don’t think it makes sense to use the term “quantize” for this operation. We already have timestretch, which lets you stretch or shrink audio to fit precise beat/bar durations; that is one part of what Elastic Audio is about. But “quantize” generally means aligning distinct data points (e.g. MIDI notes) to specific time points (typically based on a musical grid). Doing that with audio is really a different operation in my mind.
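The arithmetic behind “stretch audio to fit a precise bar duration” is simple: at a constant tempo, one beat lasts 60 / BPM seconds, so N bars of B beats last N · B · 60 / BPM seconds, and the stretch ratio is that target length divided by the recording’s current length. The sketch below only computes that ratio; the function names are hypothetical and this is not how Ardour’s timestretch is implemented internally.

```python
# Hypothetical helpers for illustration only; not part of Ardour.

def target_duration(bars: int, beats_per_bar: int, bpm: float) -> float:
    """Length in seconds of `bars` bars at a constant tempo."""
    return bars * beats_per_bar * 60.0 / bpm


def stretch_ratio(current_seconds: float, bars: int, beats_per_bar: int, bpm: float) -> float:
    """Factor to stretch (>1) or shrink (<1) audio so it exactly fills the bars."""
    if current_seconds <= 0:
        raise ValueError("current_seconds must be positive")
    return target_duration(bars, beats_per_bar, bpm) / current_seconds


# A 4.1 s recording that should fill 2 bars of 4/4 at 120 BPM (4.0 s).
ratio = stretch_ratio(4.1, bars=2, beats_per_bar=4, bpm=120)
print(f"stretch ratio: {ratio:.4f}")
```

Note that this treats the whole recording as one block; it does not move individual hits, which is exactly the difference from “quantizing” described above.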

I’m not entirely sure if this approach will give you the precise result you desire, but you can easily:

- Edit > Strip Silence

- Selected Regions > Snap Position > Snap Position to Grid

This works best with transient-heavy material such as drums, rhythmic guitar strumming or rhythmic vocal styles. That said, I have yet to see the proprietary DAWs mentioned above handle non-transient material properly, and without transients to align, what does “quantizing” even mean?
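Conceptually, the Strip Silence + Snap Position to Grid workflow splits the audio into non-silent regions and then moves each region’s start to the nearest grid line. The sketch below models that on a plain list of samples. It is an assumption-laden illustration, not Ardour’s code: the threshold is a linear amplitude (not dBFS), `min_silence` stands in for a minimum-silence-length setting, fades are ignored, and `strip_silence` / `snap_regions` are hypothetical names.

```python
import math


def strip_silence(samples, threshold, min_silence):
    """Split a mono signal into non-silent regions.

    A region ends only after at least `min_silence` consecutive samples whose
    absolute amplitude is below `threshold`, so zero crossings inside a note do
    not split it. Returns (start, end) sample indices, end exclusive.
    """
    regions = []
    start = None      # first sample of the current loud region
    last_loud = None  # most recent sample at or above the threshold
    for i, s in enumerate(samples):
        if abs(s) < threshold:
            continue
        if start is None:
            start = i
        elif i - last_loud > min_silence:
            # The quiet gap was long enough: close the old region, open a new one.
            regions.append((start, last_loud + 1))
            start = i
        last_loud = i
    if start is not None:
        regions.append((start, last_loud + 1))
    return regions


def snap_regions(regions, sample_rate, bpm, grid_beats):
    """Move each region to start on the nearest grid line, keeping its length."""
    grid = sample_rate * 60.0 / bpm * grid_beats  # grid spacing in samples
    snapped = []
    for start, end in regions:
        new_start = int(round(math.floor(start / grid + 0.5) * grid))
        snapped.append((new_start, new_start + (end - start)))
    return snapped


# Two short "hits" separated by silence; 1 kHz sample rate keeps numbers small.
signal = [0.0] * 100 + [0.8, -0.7, 0.0, 0.6] + [0.0] * 200 + [0.9, 0.5] + [0.0] * 50
regions = strip_silence(signal, threshold=0.1, min_silence=10)
print(regions)  # [(100, 104), (304, 306)]
print(snap_regions(regions, sample_rate=1000, bpm=120, grid_beats=0.5))  # [(0, 4), (250, 252)]
```

The `min_silence` guard is what makes this usable on real audio: a waveform crosses zero constantly, so splitting on every quiet sample would shred each hit. It also shows why the approach depends on transients: sustained material never produces the silent gaps needed to separate regions.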

There’s something called tempo mapping, which helps align an audio track; this method of addressing recorded timing offsets might be a valuable resource.

https://www.youtube.com/watch?v=rrr9lr_Pbkg
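Tempo mapping generally works the other way around from snapping: instead of moving the audio onto a fixed grid, the tempo is adjusted so the grid follows the performance. A rough sketch of the core arithmetic, assuming you already have the beat onset times in seconds (how they are detected or tapped is out of scope), is that the tempo between two beats is 60 divided by the interval between them. `tempo_map` is a hypothetical name, not an Ardour function.

```python
def tempo_map(beat_times):
    """Turn beat onset times (seconds) into (time, bpm) tempo markers.

    Each marker gives the tempo implied by the gap to the next beat,
    so a performance that drifts produces a drifting tempo map.
    """
    if len(beat_times) < 2:
        raise ValueError("need at least two beat times")
    markers = []
    for t0, t1 in zip(beat_times, beat_times[1:]):
        if t1 <= t0:
            raise ValueError("beat times must be strictly increasing")
        markers.append((t0, 60.0 / (t1 - t0)))
    return markers


# Illustrative beat times from a take that gradually drags.
beats = [0.0, 0.5, 1.0, 1.52, 2.06]
for time, bpm in tempo_map(beats):
    print(f"{time:5.2f} s -> {bpm:6.2f} BPM")
```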

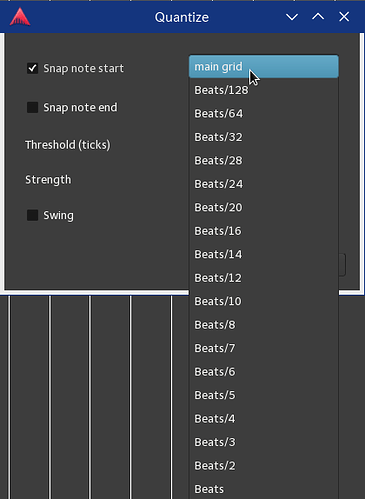

It’s another approach, similar to “rounding” to a particular beat mark. If some of your notes land on a quarter beat and some don’t, you can select a (MIDI) region and choose ‘Quantize’. The result is an edited version of the region in which each note is moved left or right from its original position, depending on how the user sets the threshold and strength of the quantization. A musician may sometimes not want 100% accuracy and prefer to keep notes merely “near” a beat mark, preserving the human timing offsets so the MIDI note data doesn’t sound too robotic.
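The strength/threshold behaviour described above can be sketched as follows. This is a minimal illustration under stated assumptions, not Ardour’s quantize implementation: positions are in beats, `strength` scales how far each note moves toward the nearest grid line, and `threshold` is assumed to mean “leave notes alone if they are already this close to the grid” (DAWs define threshold differently, so treat that as one possible interpretation). `Note` and `quantize` are hypothetical names.

```python
import math
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Note:
    start: float  # onset position in beats
    pitch: int    # MIDI note number


def quantize(notes, grid=0.25, strength=1.0, threshold=0.0):
    """Pull each note's start toward the nearest grid line.

    grid:      grid spacing in beats (0.25 = a quarter of a beat)
    strength:  0.0 leaves notes alone, 1.0 moves them exactly onto the grid
    threshold: notes already closer to the grid than this (in beats) are left
               untouched, keeping small human timing offsets (assumed meaning)
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    result = []
    for note in notes:
        # Nearest grid line; exact halfway points round later in time.
        target = math.floor(note.start / grid + 0.5) * grid
        offset = target - note.start
        if abs(offset) < threshold:
            result.append(note)
        else:
            result.append(replace(note, start=note.start + strength * offset))
    return result


take = [Note(0.55, 60), Note(1.02, 62), Note(1.9, 64)]
for note in quantize(take, grid=0.5, strength=0.5, threshold=0.03):
    print(note)
```

With `strength=0.5` each moved note only travels half the distance to the grid, and with `threshold=0.03` the note at 1.02 beats stays where it was, which is the “keep it near the beat, not on it” effect described above.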

It’s been 4 years.

Any progress on this feature?

I haven’t tried Ardour in a while, mainly because I was missing this specific feature, which I use all the time in production nowadays.

I still donate monthly, though, and hope to see the day when I can get things done as easily and quickly as I do in Logic Pro.
