Hi everyone! I’m a long-time lurker here (since 2008 or so) and a big-time Ardour fan, and I have used it as my sole replacement for Nuendo and Cubase ever since I learned about it. Apologies in advance for the novel; normally I am much more terse, but I have a lot to share today!

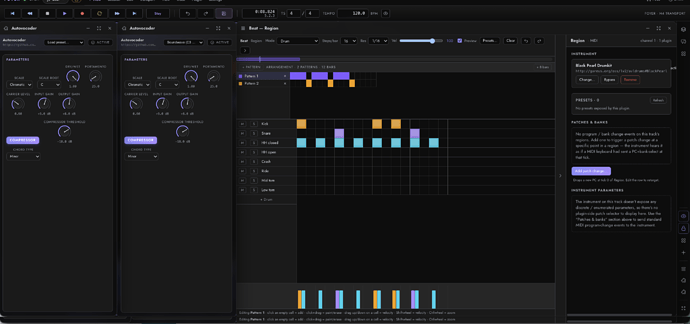

So the thing with the recent leap in the capabilities of generative AI is that it is super easy to nerd-snipe yourself. This started two weekends ago with a self-imposed challenge: make a polished auto-vocoder LV2 plugin with Claude Code, because I was having trouble finding one I liked. About 30 minutes later I had one working and was super excited, and then the challenge escalated into “can I wire an AI agent directly into Ardour, and can I make Ardour kid-friendly, since my children love playing with the audio effects on a live monitored mic?”

The original challenge of putting an AI agent into Ardour got back-burnered (I do this for my day job, so it isn’t all that novel), as did making Ardour kid-friendly, but all of the plumbing is now in place to make either of these a relatively low lift. What came out of this week-long, AI-induced manic episode is:

Foyer Studio

Named for this, the little studio I built in the foyer for my kids to learn audio engineering (and also for an etymological link: the words ardour and foyer both have Latin roots that literally mean flame/fire/heat/burning and hearth/fireplace, respectively).

This would not have been possible without all of the work Paul Davis, Robin Gareus and all of the others put in over the last 20+ years on JACK and Ardour, so go donate!

So what is this?

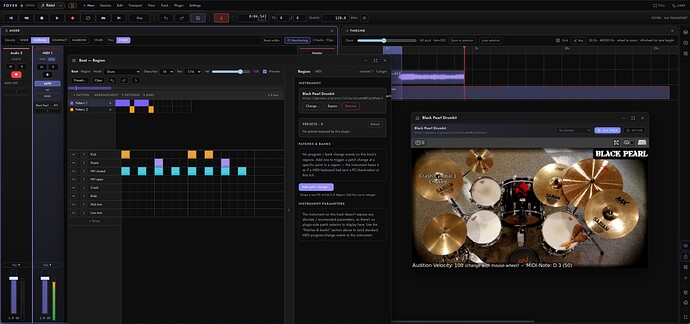

It’s a web-native DAW remote-projection control surface with real-time collaborative tooling (e.g. multiple people patched into the same session from anywhere in the world) that currently has one back end: Ardour. The user interface runs in a web browser and can be shared with session collaborators over CloudFlare tunnels for low-ceremony, real-time remote collaboration. The use cases I envisioned are:

- Sharing a mix in progress with a performer and being able to make changes in realtime with their feedback, without them being physically present (yes I know screen shares with audio exist, but this was more fun!)

- A performer laying a track remotely

- Real-time collaboration on the mix, effects, timeline, instruments, etc.

- Providing accessible hackability to the DAW’s user interface: by reprojecting the state of a DAW like Ardour to a web-based UI, tons of possibilities open up for use cases you just couldn’t satisfy in a pro-grade DAW UI, like making Ardour a MIDI-only application à la Cakewalk 3, making a transport remote for your phone so you can engineer your own performances with ease, making a kid-friendly, touch-friendly interface, or:

- Extending the core DAW with new feature compositions: for example, I created a simple beat and piano-roll sequencer that generates MIDI from the sequencer and saves the sequencer data in the region’s data extension in the .ardour file, because my 8-year-old loves playing with Hydrogen and frankly so do I
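For anyone curious what “generate MIDI from the sequencer” boils down to, here is a minimal Rust sketch of turning a step grid into timed note-on/note-off messages. It is not foyer’s code: the `Lane` and `MidiEvent` types, the half-step gate length, and the choice of General MIDI drum channel 10 are assumptions for illustration, and persisting the pattern into the .ardour region extension is not shown.

```rust
/// One row of a drum-style step sequencer (hypothetical type).
struct Lane {
    note: u8,         // MIDI note number, e.g. 36 = kick in General MIDI
    steps: Vec<bool>, // true = hit on that step
}

/// A raw three-byte MIDI message scheduled at a tick offset.
#[derive(Debug, PartialEq)]
struct MidiEvent {
    tick: u32,
    bytes: [u8; 3],
}

const NOTE_ON_CH10: u8 = 0x99; // note-on, channel 10 (GM drums)
const NOTE_OFF_CH10: u8 = 0x89; // note-off, channel 10

/// Turn the step grid into note-on/note-off pairs sorted by time.
/// Each hit is gated for half a step.
fn render(lanes: &[Lane], ticks_per_step: u32, velocity: u8) -> Vec<MidiEvent> {
    let mut events = Vec::new();
    for lane in lanes {
        for (i, &on) in lane.steps.iter().enumerate() {
            if !on {
                continue;
            }
            let start = i as u32 * ticks_per_step;
            events.push(MidiEvent { tick: start, bytes: [NOTE_ON_CH10, lane.note, velocity] });
            events.push(MidiEvent {
                tick: start + ticks_per_step / 2,
                bytes: [NOTE_OFF_CH10, lane.note, 0],
            });
        }
    }
    // Stable sort, so a note-on stays ahead of its own note-off if they share a tick.
    events.sort_by_key(|e| e.tick);
    events
}
```

With 480 ticks per quarter note, `ticks_per_step = 120` gives a 16th-note grid.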

Here are some videos of it in action:

Installation from scratch + tracking from browser in a new project

Loading an existing project, adding beats and music with sequencer, instrument selection

Remote collaboration over CloudFlare tunnels (free, no account required!), plugins, floating and tiled window management, right dock with tear-out FABs

How is it built?

Let me be straight up: this project was vibe coded AF. There is no way one person with minimal domain knowledge of the inner workings of a DAW builds something like this in their free time, from ideation to fully functioning prototype over the course of 9 days, without having Claude Opus (and Kimi K2.6 too) do the heavy lifting. Let me rephrase that: this was agentically engineered. I do have strong opinions on the tech stack, the architecture, the design, the look and feel, and the features, so those all came from me. The implementation and execution of those opinions is all clankers.

The core app is a Rust/axum API server, foyer, that talks to the Ardour back end over IPC (MessagePack over a Unix socket). The IPC carries a DAW-agnostic payload (commands, state events, mutations, lifecycle events, audio streams, object properties such as mixer channels, automation and MIDI rolls, session management, and more) to and from a “shim”, which is basically a C++ control surface plugin compiled against libardour.so. The shim translates between foyer’s agnostic format and Ardour’s internal data structures, function calls, etc., and taps into Ardour’s internal audio streams so we can get audio in and out.
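To make the “MessagePack over a Unix socket” part concrete: a stream socket has no message boundaries, so each message needs framing. Below is a minimal Rust sketch assuming a 4-byte big-endian length prefix in front of each MessagePack payload. foyer’s actual frame format, the `write_frame`/`read_frame` names, and the 16 MiB cap are assumptions, and the payload is treated as opaque bytes rather than decoded.

```rust
use std::io::{self, Read, Write};

/// Hypothetical cap so a corrupt length prefix can't trigger a huge allocation.
const MAX_FRAME_LEN: usize = 16 * 1024 * 1024;

/// Write one frame: a 4-byte big-endian length, then the payload bytes
/// (in foyer's case these would be MessagePack; here they are opaque).
fn write_frame<W: Write>(w: &mut W, payload: &[u8]) -> io::Result<()> {
    if payload.len() > MAX_FRAME_LEN {
        return Err(io::Error::new(io::ErrorKind::InvalidInput, "frame exceeds limit"));
    }
    let len = payload.len() as u32; // fits: MAX_FRAME_LEN < u32::MAX
    w.write_all(&len.to_be_bytes())?;
    w.write_all(payload)?;
    w.flush()
}

/// Read one frame. Returns Ok(None) if the stream ends before a full
/// length prefix arrives (a truncated prefix is treated as end-of-stream).
fn read_frame<R: Read>(r: &mut R) -> io::Result<Option<Vec<u8>>> {
    let mut len_buf = [0u8; 4];
    match r.read_exact(&mut len_buf) {
        Ok(()) => {}
        Err(e) if e.kind() == io::ErrorKind::UnexpectedEof => return Ok(None),
        Err(e) => return Err(e),
    }
    let len = u32::from_be_bytes(len_buf) as usize;
    if len > MAX_FRAME_LEN {
        return Err(io::Error::new(io::ErrorKind::InvalidData, "frame exceeds limit"));
    }
    let mut payload = vec![0u8; len];
    r.read_exact(&mut payload)?; // a truncated body is a real error
    Ok(Some(payload))
}
```

Because `std::os::unix::net::UnixStream` implements both `Read` and `Write`, the same two functions work on a real socket as well as on in-memory buffers.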

AI-generated text diagram coming in 3…2…1:

```
   Ardour (or any DAW)
            │
            ▼
┌────────────────────────┐   Unix socket, MessagePack framing
│ C++ shim               │ ───────────────────────────────┐
│ (libfoyer_shim.so)     │                                │
│ · event translation    │                                ▼
│   only - no UX logic   │                     ┌──────────────────────┐
└────────────────────────┘                     │ Rust sidecar         │
                                               │ · foyer-server       │
┌────────────────────────┐                     │ · foyer-backend-*    │
│ Web UI (Lit + Tailwind)│ ◄── WS / HTTP ────► │ · foyer-schema       │
│ · three-tier split     │                     │ · foyer-ipc          │
│ · no shipping bundler  │                     └──────────────────────┘
└────────────────────────┘                                │
                                                          ▼
                                               ┌──────────────────────┐
                                               │ Optional:            │
                                               │ foyer-desktop (wry)  │
                                               └──────────────────────┘
```

The same architecture could apply to any DAW, provided it has a rich-enough SDK or is hackable enough, but Ardour has my heart, so that was my target; if anyone wants to try to make a shim for Bitwig or REAPER, I’ll be accepting PRs.

The UI lives on the other side of the foyer API and talks to it over standard HTTP for static assets (the UI’s JavaScript, HTML, CSS and images) and over WebSockets for streaming state and audio IO, using the same serialized structures the shim speaks but as JSON payloads. Audio is sent as an Opus-compressed stream by default, with the option to switch to uncompressed streaming.
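A quick back-of-the-envelope on why Opus makes sense as the default: uncompressed PCM bitrate is just sample rate × channels × bits per sample. The sketch below assumes 48 kHz stereo and compares 32-bit float and 16-bit integer formats (which format foyer’s uncompressed mode actually uses is an assumption) against the 510 kbit/s upper end of Opus’s range from RFC 6716.

```rust
/// Uncompressed PCM bitrate in bits per second.
fn pcm_bitrate_bps(sample_rate_hz: u32, channels: u32, bits_per_sample: u32) -> u64 {
    sample_rate_hz as u64 * channels as u64 * bits_per_sample as u64
}

/// Upper end of Opus's supported bitrate range (6 to 510 kbit/s, RFC 6716).
const OPUS_MAX_BPS: u64 = 510_000;
```

That works out to 3,072 kbit/s for 32-bit float stereo and 1,536 kbit/s for 16-bit stereo, versus at most 510 kbit/s for Opus, which adds up quickly over a tunnel to someone’s home connection.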

The UI was made with zero-build JavaScript using Lit as the primary framework (e.g. standard web components, shadow DOM), Tailwind CSS, and a few vendored helper libraries, and that’s really it. It is intentionally super hackable: the core logic for audio and for binding event IO over WebSockets lives in a standalone library with no UI/DOM code at all, and the rest of the UI lives in separate downstream projects that depend on it. The UI is unpacked to ~/.local/share/foyer/web on first launch, where it can be modified and served out in real time.

There are a handful of other architecturally novel things:

- Built-in tunnels (CloudFlare and ngrok, with ngrok untested) for instantly sharing a session, using RBAC-based, single-use user accounts with scoped permissions (e.g. viewer, performer, session controller, admin) that can be shared in 3 clicks for the first collaborator and 1 click for each additional one

- Relay chat and push-to-talk audio conferencing

- Remote audio ingress, so a performer can lay a track from afar (with self-monitoring disabled because of the additional latency)

- An advanced tiling window manager in the shipping UI

- Some other things that are escaping me at the moment
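Here is a minimal Rust sketch of how scoped roles and single-use invites could fit together. Only the four role names come from foyer; the `Action` list, the role-to-action mapping, treating the roles as a strict hierarchy, and the `Invite` type are all hypothetical.

```rust
/// The four roles from the post, declared from least to most privileged.
/// Deriving Ord makes declaration order the privilege order.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
enum Role {
    Viewer,
    Performer,
    SessionController,
    Admin,
}

/// Hypothetical actions a collaborator might attempt.
#[derive(Debug, Clone, Copy)]
enum Action {
    WatchSession,
    SendAudio,
    ChangeMix,
    ControlTransport,
    ManageInvites,
}

impl Action {
    /// Minimum role for each action (a hypothetical mapping).
    fn min_role(self) -> Role {
        match self {
            Action::WatchSession => Role::Viewer,
            Action::SendAudio => Role::Performer,
            Action::ChangeMix | Action::ControlTransport => Role::SessionController,
            Action::ManageInvites => Role::Admin,
        }
    }
}

/// A role may perform an action if it is at least the action's minimum role.
fn is_allowed(role: Role, action: Action) -> bool {
    role >= action.min_role()
}

/// A single-use invite: hands out its role once, then never again.
struct Invite {
    role: Role,
    redeemed: bool,
}

impl Invite {
    fn new(role: Role) -> Self {
        Invite { role, redeemed: false }
    }

    fn redeem(&mut self) -> Option<Role> {
        if self.redeemed {
            None
        } else {
            self.redeemed = true;
            Some(self.role)
        }
    }
}
```

Deriving `Ord` turns the permission check into a single comparison; if the roles ever stop being strictly nested, per-permission scopes would be the more flexible design.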

Licensing: the C++ shim links libardour and is GPL-2.0+ as required. The Rust sidecar and web UI sit above the IPC boundary and ship under Apache-2.0.

What actually works?

Anything you saw in the demo videos is legit: not production-grade, but usable as seen (“it works on my machine” haha). Unfortunately I couldn’t figure out how to capture the browser audio in the screen recordings on a Mac, but it was mostly me singing into a Dell speakerphone, so you didn’t miss much. I will open issues on GitHub to track things that are broken and fix them as I can. There are rough edges, weird quirks, and other things that will need to be sorted out over time.

There are a ton of features in Ardour, and I am targeting the most common/visible subset to make something compelling but pragmatic that taps into that ecosystem; it would be insane to chase 20+ years of features right off the bat. My goal isn’t to recreate Ardour in a web browser, but to complement it with new collaboration abilities and to unlock a whole world of UX possibilities and feature extensibility.

What is left to do?

The DAW-specific agentic harness was a big one I wanted to do; I just had to build all of this other stuff around it to make it useful and practical. There is a nice set of MCP tools being added to Ardour’s core that will definitely be useful for an agent, and there are additional tools I’d want to add, like spectrograms, waveform visualizations and audio snippets (for multimodal models), plain-English descriptions of mixer, plugin and track states, and UI integration.
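As a rough idea of what a “plain-English description of mixer state” for an agent could look like, here is a minimal Rust sketch. The `Channel` fields, the wording, and the -1.0 to 1.0 pan range are assumptions; the only fixed piece is the standard amplitude-to-decibel conversion, 20·log10(gain).

```rust
/// Hypothetical snapshot of one mixer strip, as an agent might receive it.
struct Channel {
    name: String,
    gain: f32,            // linear gain, 1.0 = unity
    pan: f32,             // -1.0 = hard left, 0.0 = center, 1.0 = hard right
    muted: bool,
    plugins: Vec<String>, // insert chain in signal order
}

/// Standard amplitude-to-decibel conversion.
fn gain_to_db(gain: f32) -> f32 {
    20.0 * gain.log10()
}

fn describe_pan(pan: f32) -> String {
    if pan.abs() < 0.05 {
        "panned center".to_string()
    } else if pan < 0.0 {
        format!("panned {:.0}% left", -pan * 100.0)
    } else {
        format!("panned {:.0}% right", pan * 100.0)
    }
}

/// Render one strip as a single English sentence for an LLM prompt.
fn describe(ch: &Channel) -> String {
    let state = if ch.muted { "muted" } else { "unmuted" };
    let fader = if ch.gain > 0.0 {
        format!("fader at {:.1} dB", gain_to_db(ch.gain))
    } else {
        "fader all the way down".to_string() // avoid printing -inf dB
    };
    let chain = if ch.plugins.is_empty() {
        "no plugins".to_string()
    } else {
        format!("plugins: {}", ch.plugins.join(" -> "))
    };
    format!("Track '{}' is {}, {}, {}, {}.", ch.name, state, fader, describe_pan(ch.pan), chain)
}
```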

Others include:

- A touch-friendly UI, both big and small (e.g. transport remote from your phone)

- A kid-friendly UI

- Exposing additional Ardour features that were omitted (punches, cross-fades, z-indexes for track stacking, markers, dynamic tempos and time signatures, splicing, snapping, video integration, tons of stuff on the instrumentation and plugins side)

- Test the Linux installation (macOS thoroughly tested)

- MIDI instrumentation is missing some critical functionality like a working patch selector, and there are a few bugs with the piano roll, synth instruments and region resizing that need a once-over to make this usable; drum kits seem to work well, though

- Make sure this works on real hardware via remote, not just the 48 kHz audio from my MBP

- Keyboard-first session navigation (limited to a handful of functions now)

- Multi-window support for multiple monitors

- Tighter session recovery/reattachment logic

- Reliable attachment to running GUI instances of Ardour

- More advanced UI-side presets management, templates, power tools for reducing clicks

- Front-end visualizations: with WebGL and WebGPU as first-class APIs, we can create rich accelerated visualizations that look and feel awesome

- Building, packaging, and supporting more platforms (currently just Linux and macOS arm64)

- Temporally accurate syncing of audio and controls (e.g. delaying control movements by the latency incurred in the audio stack)

- Temporally accurate offsetting for regions recorded remotely via foyer audio ingress
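The offsetting item is mostly sample arithmetic: a latency of L ms at a sample rate of R Hz is L × R / 1000 samples, so 50 ms at 48 kHz is 2,400 samples. Here is a minimal Rust sketch of shifting a remotely recorded region earlier by a measured round-trip latency, assuming the region start is an absolute sample position and that the round trip has already been measured somehow (neither detail comes from foyer).

```rust
/// Convert a latency in milliseconds to the nearest whole number of samples.
fn latency_samples(latency_ms: f64, sample_rate_hz: u32) -> u64 {
    (latency_ms * sample_rate_hz as f64 / 1000.0).round() as u64
}

/// Move a remotely recorded region earlier by the round-trip latency so it
/// lines up with the material the performer was hearing. Clamps at sample 0.
fn compensate_region_start(recorded_start: u64, round_trip_ms: f64, sample_rate_hz: u32) -> u64 {
    recorded_start.saturating_sub(latency_samples(round_trip_ms, sample_rate_hz))
}
```

Delaying control movements would use the same conversion, just scheduling the control change that many samples later instead of moving a region earlier.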

So how do I run it?

```shell
curl -fsSL https://raw.githubusercontent.com/hotspoons/foyer-studio/main/install.sh | bash -s -- --latest-ci
```

(Currently macOS arm64 and Linux arm64/amd64 are supported; this pulls the latest successful build from the main branch.)

Then source your shell’s rc file or spawn a new shell, and run `foyer serve` to launch it on http://localhost:3838. The config file, web UI, and more live under ~/.local/share/foyer and will be present after the first launch.

NOTE: the shim is currently built against the Ardour 9.2 ABI and will not be compatible with other versions of Ardour. If you want to build for a different tag, branch or ref, follow these instructions, though code changes may be required in the shim if the libardour interfaces it calls differ in the target ref.
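Since the shim is pinned to one Ardour release, a cheap guard is to compare the running version against the one it was built for before loading. The sketch below is hypothetical: foyer may not perform this check at all, and the `check_abi` name, the major.minor-only comparison, and where the version string comes from are assumptions.

```rust
/// Ardour release the shim was compiled against (9.2, per the note above).
const BUILT_AGAINST: (u32, u32) = (9, 2);

/// Parse "major.minor[.patch]" into (major, minor); None if malformed.
fn parse_major_minor(version: &str) -> Option<(u32, u32)> {
    let mut parts = version.trim().split('.');
    let major: u32 = parts.next()?.parse().ok()?;
    let minor: u32 = parts.next()?.parse().ok()?;
    Some((major, minor))
}

/// Hypothetical load-time guard: accept only the exact major.minor the shim was built for.
fn check_abi(running: &str) -> Result<(), String> {
    match parse_major_minor(running) {
        Some(v) if v == BUILT_AGAINST => Ok(()),
        Some((major, minor)) => Err(format!(
            "shim was built for Ardour {}.{} but found {}.{}",
            BUILT_AGAINST.0, BUILT_AGAINST.1, major, minor
        )),
        None => Err(format!("unrecognized Ardour version string: {:?}", running)),
    }
}
```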

For development, clone the repository and open the dev container.