I’m not sure; I’ll test it and report back.

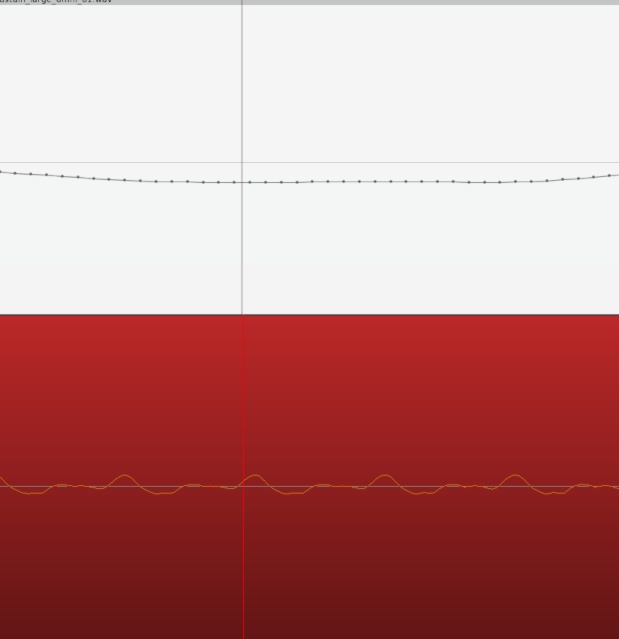

Normalizing the whole file is not very useful because of the aforementioned dynamic range. However, I just played around with the gain boost/cut, and pushing it so that the loudest peaks go beyond 0 dB lets me see the quietest peaks really well. It effectively gives me the same view I would get from vertical zoom. The missing piece was that I needed to go back to the linear waveform view, because in logarithmic view the noise is too prominent. I’ll explore writing a Lua script that drops the track’s gain as the region is boosted, so that I can play back if needed without destroying my ears.

One advantage/disadvantage (depending on the situation) of this approach is that the effective zoom of each region is independent, whereas with “regular” vertical zoom all regions would be zoomed equally.
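The gain-boost trick is plain dB arithmetic. Here is a rough Python sketch of it. This is not Ardour’s Lua API, and the function names and peak values are hypothetical. It computes how much boost makes a region’s peak fill the display, plus the equal-and-opposite track trim the proposed script would apply so that playback level is unchanged:

```python
import math


def boost_db(region_peak: float, target_peak: float = 1.0) -> float:
    """Gain in dB that scales region_peak up to target_peak (1.0 = 0 dBFS)."""
    return 20.0 * math.log10(target_peak / region_peak)


def db_to_linear(db: float) -> float:
    """Convert a gain in dB to a linear factor."""
    return 10.0 ** (db / 20.0)


# Hypothetical region peak levels (linear, 1.0 = 0 dBFS).
region_peaks = {"quiet passage": 0.02, "loud tutti": 0.9}

for name, peak in region_peaks.items():
    boost = boost_db(peak)  # each region gets its own "zoom"
    trim = -boost           # equal-and-opposite track gain for playback
    net = db_to_linear(boost) * db_to_linear(trim)
    print(f"{name}: region {boost:+.1f} dB, track {trim:+.1f} dB, net x{net:.3f}")
```

Setting `target_peak` above 1.0 corresponds to pushing peaks past 0 dB. Because each region needs a different boost, a single track trim can only compensate one region at a time, which is the per-region independence described above.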

In my view, this would be problematic as a workflow, as would @paul’s normalizing idea. You shouldn’t need to mess with volume in order to adjust the waveform zoom. For a classical live concert, normalizing each individual piece isn’t an option, because it should all have the same room tone level. Also, when I’m cutting, I always want to listen back quickly to double-check that I haven’t cut in the wrong place. Ear damage and clipping distortion are big no-nos. I think the answer is still a proper waveform zoom feature. Samplitude/Sequoia, Reaper, Studio One, Pyramix, Cubase, Logic Pro X etc. all have it.

I agree 100%, especially since it’s apparently not difficult to implement.

Read back: I did not say that. I said it’s a deliberate choice.

The reason it’s not present has nothing to do with whether it’s complicated to implement. I’d actually expect it to be time-consuming to implement correctly.

I deliberately reject it because the waveform is not a true representation of the signal (really, it should be a lollipop graph, or up-sampled), and operations should not depend on a user interpreting it. Rather, tools should be added to support the workflows for which users currently use the waveform view as a workaround.

If someone were to add this feature, many follow-up issues would also have to be addressed. For example, there would need to be a clear indication of the y-axis range of every track. Also, recording must reset the scale so that clipping is clearly indicated, etc.

While I’m in the camp that has not had any issues with log view for all my classical cutting, I have seen enough examples to suggest that something is needed. For me the question is not one of “true” representation but of a visual zoom, however unscientific, to assist in cutting. The feature is in enough of the mainstream DAWs for me to consider it a standard (a little like others think of the vertical MIDI velocity “sticks”). I can speak of my experience in Sequoia. When the waves were too small, I increased the zoom via a shortcut, and once all cutting in the problem area was done, I would just press another shortcut to reset it. The default should obviously be 100%, but I was never confused about the y-axis range: if zoom was engaged, everything looked stretched and it was obvious. For recording, I’m definitely looking at the meters, not the waveform, but that might just be me.

Why not add a lollipop graph? I don’t mind the tool as long as it makes the workflow easier.

I hear you, but again, it feels like something of a hack compared to just implementing the same tool as other DAWs. There may be scientific reasons that waveforms are not great (does this apply to our regular waveform view, too?), but not once have I used another DAW’s vertical wave zoom and thought “Boy, this is such a bad implementation!” In any case, surely what we hear out of our digital-to-analog converter isn’t a series of lollipops!

In the end, IMHO, there are workflows that Ardour/Mixbus clearly do better than most DAWs (exporting, for example), but then there are things that people expect to be a certain way. I’m not talking about things done inefficiently; I’m talking about tried and tested efficient workflows. Cutting up regions and then normalizing them in order to see the waveform (and subsequently returning each to its starting volume and stitching them back together) isn’t a workflow but a temporary and long-winded workaround.

I say all of this respecting that I am not the developer and that clearly Paul, Robin et al. have specific goals and views on what they consider “correct” implementation. I do feel it’s worth sparing a thought for users coming from other popular DAWs which seem to share certain feature sets that have in essence become industry standards for good reason.

If it turns out that vertical zoom is the “correct” approach, I’m fine with Ardour supporting it.

However, vertical zooming just encourages workflows that are clearly wrong, e.g. splitting at zero-crossings or cutting right at transients. We should discourage those (and provide better tools).

Isn’t splitting a (mono) region at zero-crossings something to be encouraged?  I used the snap to zero-crossing feature in SoundForge to edit podcasts extensively. Cross-fading just before a transient (versus “right at”) is something I use all the time for classical editing as apparently the transient “pre-masks” even a messy fade. Correct me if I’m wrong on this!

I agree 100%. But vertical zoom would be better than what Ardour currently provides. If there is a better suggestion, please implement it.

One of these days I’ll write a blog post; until then, prefer short fades.

In short, there may be a DC offset, and even if there isn’t, splitting at a zero-crossing only avoids a high-frequency jump but still introduces harmonic distortion.
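To illustrate the DC-offset point, here is a small sketch using a synthetic test tone and a hypothetical `zero_crossings` helper. A snap-to-zero-crossing search that only looks for sign changes between samples finds nothing at all once the material sits on a DC offset, even though the tone itself is unchanged:

```python
import math


def zero_crossings(samples):
    """Indices i where the sign differs between samples[i - 1] and samples[i]."""
    return [i for i in range(1, len(samples))
            if (samples[i - 1] < 0) != (samples[i] < 0)]


fs = 48000      # sample rate (Hz)
freq = 440.0    # test tone (Hz)
length = 480    # 10 ms of audio

tone = [0.3 * math.sin(2 * math.pi * freq * k / fs) for k in range(length)]
with_dc = [s + 0.5 for s in tone]  # the same tone riding on a 0.5 DC offset

print(len(zero_crossings(tone)))     # several sign changes
print(len(zero_crossings(with_dc)))  # 0: nothing to snap to
```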

When converting digital back to analog,

`a[-2], a[-1], 0, a[1], a[2]`

is not the same as

`0, 0, 0, a[1], a[2]`

(unless `a[-2] == a[-1] == 0`).
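Here is a minimal numeric sketch of the point above. It assumes an ideal (sinc) reconstruction filter as a stand-in for the DAC’s low-pass filter and uses made-up sample values. The two sequences agree at every sample instant n ≥ 1, but the reconstructed signal between those instants differs, because the samples before the split still contribute:

```python
import math


def sinc(x: float) -> float:
    """Normalized sinc: impulse response of an ideal reconstruction filter."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


def reconstruct(samples: dict, t: float) -> float:
    """Band-limited value at time t (in samples); unlisted samples are 0."""
    return sum(v * sinc(t - n) for n, v in samples.items())


# Hypothetical sample values around a "zero crossing" at n = 0.
original = {-2: 0.2, -1: 0.6, 0: 0.0, 1: -0.6, 2: -0.2}
# Same material with everything before the split replaced by silence.
spliced = {-2: 0.0, -1: 0.0, 0: 0.0, 1: -0.6, 2: -0.2}

for t in (0.5, 1.0, 1.5, 2.0):
    print(f"t={t}: original {reconstruct(original, t):+.4f}, "
          f"spliced {reconstruct(spliced, t):+.4f}")
```

At t = 1.0 and t = 2.0 the values match, because sinc is zero at every other integer. At t = 0.5 and t = 1.5 they do not. A real DAC uses a finite, non-ideal filter, so the numbers are only indicative.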

I’m afraid this is not at all clear to me, but the English explanation above works just fine. For classical I’ve only ever used short fades. There are classical engineers who only use butt splices (i.e. no crossfades), but having been immersed in Sequoia’s and Pyramix’s crossfade editors, I could never fathom why they’d want to do that.

Have a look at the end of https://xiph.org/video/vid2.shtml (band-limiting and timing). Really, the whole video should be mandatory viewing for sound engineers and is time well spent!

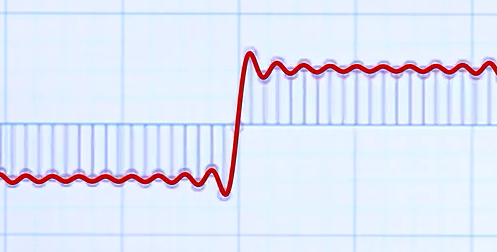

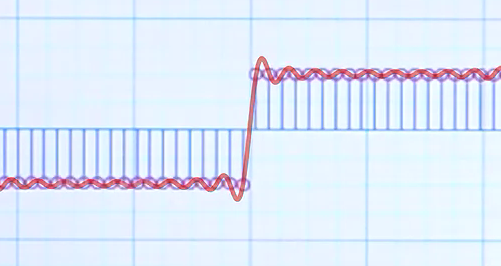

This screenshot if from that video:

If you slice the left half off, you end up with a different signal, since the DAC reconstruction filter includes a low-pass filter.

Compare this to the identical waveform drawn as “perfect” square wave (without the zero sample in the center):

PS. @DHealey was spot on with the meme “there is no zero crossing”

I’ve watched it multiple times in the past, but clearly it is something to revisit on a regular basis.

Even though I’m familiar with the subject, I also revisited it a couple of times.

Talking about lollipops and looking at those images got me thinking about how a waveform is drawn in a DAW. I always assumed it was drawn point by point, with a curve then drawn over the points, just like Robin’s image. But I’m thinking perhaps that isn’t how waveforms are drawn in Ardour?

I took an audio file and zoomed in (horizontally) as far as I could in Reaper, and then again in Ardour. In Reaper the zoom seems to go much further, and I can see dots, which I think are the heads of the imaginary lollipops. In Ardour I don’t see this level of detail, which is what led me to my assumption above.

So if Ardour did draw the waveforms with lollipops and a curve, and we all agree that lollipops are a good representation of the audio, could we then get vertical zooming that is also a good representation of the audio? I assume this is what Reaper (and perhaps other DAWs) are doing.

Drawing waveforms is complex. There are a variety of algorithms.

Unless you draw at a zoom level of 1 sample per pixel, you’re not drawing the waveform itself. Ardour will zoom into 1 sample per pixel, but no further.

Above that zoom level (i.e. N samples per pixel), you’re inevitably just rendering some “version” of the waveform. Most DAWs, Ardour included, draw a “peak view” where there’s a vertical line showing the max and min sample of the N values represented at that pixel position.
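The peak view described above boils down to a min/max reduction per pixel column. The following is a simplified sketch, not Ardour’s actual implementation; the function name is hypothetical:

```python
def peak_view(samples, samples_per_pixel):
    """Reduce samples to one (min, max) pair per pixel column."""
    columns = []
    for start in range(0, len(samples), samples_per_pixel):
        chunk = samples[start:start + samples_per_pixel]  # the N samples under this pixel
        columns.append((min(chunk), max(chunk)))  # drawn as a vertical line min..max
    return columns


samples = [0.0, 0.5, -0.25, 0.75, -1.0, 0.1, 0.2, 0.3]
print(peak_view(samples, 4))  # [(-0.25, 0.75), (-1.0, 0.3)]
print(peak_view(samples, 1))  # at 1 sample per pixel, min == max for every column
```

At 1 sample per pixel each column collapses to a single sample value. This is the limit mentioned above, beyond which there is nothing more to show without interpolating.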

I don’t consider the zoom level past 1 sample per pixel to be of much use.

Even if we draw 1 sample per pixel, we don’t: see the screenshots above of a band-limited signal from the xiph video. The waveform does not correspond to the actual analog signal.
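An inter-sample peak is a standard illustration of why even a 1-sample-per-pixel drawing misrepresents the analog signal. The minimal sketch below assumes ideal sinc reconstruction, truncated to a finite number of samples, so the reconstructed value is approximate:

```python
import math


def sinc(x: float) -> float:
    """Normalized sinc: impulse response of an ideal reconstruction filter."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


# A sine at a quarter of the sample rate, shifted by 45 degrees, so every
# sample lands on +/-0.707 while the continuous signal peaks at 1.0.
N = 4000
samples = {n: math.sin(math.pi / 2 * n + math.pi / 4) for n in range(-N, N + 1)}


def reconstruct(t: float) -> float:
    """Sinc interpolation at time t (in samples); truncated, so approximate."""
    return sum(v * sinc(t - n) for n, v in samples.items())


print(max(abs(v) for v in samples.values()))  # ~0.707: tallest drawn sample
print(reconstruct(0.5))                        # ~1.0: peak between samples 0 and 1
```

Connecting the dots, or drawing lollipops at the sample values, would show a peak of about 0.707 (roughly -3 dBFS). The reconstructed signal, however, actually reaches full scale.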
